Empathy is the ability to understand and share the emotions of another. Humans are capable of empathizing with their friends, family, and even complete strangers. The Bible says in Romans 12:15 to rejoice with those who rejoice and weep with those who weep; therefore, we know that empathy is a biblical trait to be sought after. But what happens when the enemy uses our God-given ability to empathize against us? What happens when they extend that to empathizing with machines and robots?

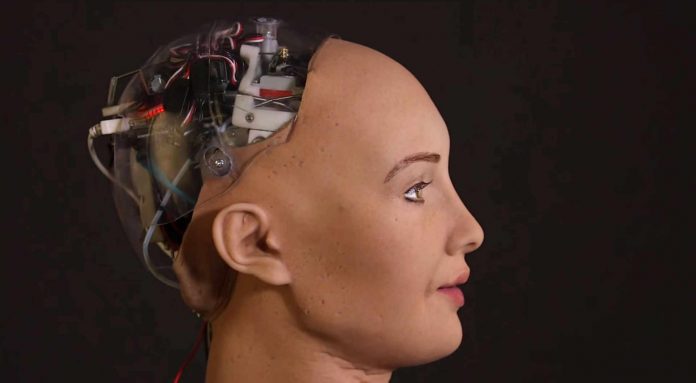

Researchers in Japan studied just that, and their findings are disturbing. Using an fMRI, they examined the neural correlates of empathy towards robots. By showing videos of a human, a robot, and an inanimate object being treated either lovingly or violently, they found that violent interaction towards both the robot and the human resulted in similar reactions. The brain was activated in the same areas, indicating that violence toward a robot and violence toward a human elicit similar emotional reactions. Fortunately, participants did show slightly more empathetic concern for the human than the robot. This shows that some humans already have empathy towards robots naturally. There is just one problem: robots can’t empathize with humans.

Technologists believe that they can make robots empathize, but can they really? How can you teach emotions? How can you grant a metal or plastic object feeling and life? You can’t. It will always be artificial, no matter how authentic it may seem. But that doesn’t stop the tech giants from trying.

The beginning stages of granting “empathy” to robots are already underway. Honda just unveiled its attempt, called 3E-A18, an “empathetic robotic device designed to explore the emotional connection between machines and humans.” The device’s plastic digital face is programmed to examine human facial expressions and respond by showing emotions on its screen, either smiling or frowning. Sitting atop an omnidirectional driving wheel, the A18 has a rounded, egg-like shape with a soft exterior skin that “invites people to touch or hug the robot,” according to Honda. Honda’s stated goal is to support people by serving as a guide in public or giving comfort to humans in distress.

Pepper is a similar robot, a four-foot-tall humanoid intended for employment. Sprint hired Pepper for customer relations within its retail stores. Sprint Business President Jan Geldmacher explained,

“Pepper is a great member of our staff and we deploy Pepper right now to our retail stores at Sprint and Pepper actually is a robot that can manage to read the mood of our customers by using its sensors. So, Pepper sees and reads if people are happy or if people are angry and can act accordingly.”

This comes around the same time that Pepper got fired for incompetence from a grocery chain only a week after being hired. Apparently, the humanoid was assigned to pass out samples of sausage, but only two customers accepted a sample from the robot, compared to 12 customers who accepted one from a human employee. Customers explained that they were scared of the knife-wielding android and decided to stay away.

Of the many faults in emotion-reading AI, one is that it can only pick up on the six basic expressions (anger, sadness, fear, disgust, surprise, and happiness) that are universal across cultures. Social emotions such as embarrassment, pride, or flirtation cannot be decoded by the artificial intelligence.

Human empathy gives us the ability to pick up on the undertones of emotions in situations. For example, someone may be feeling angry but learn to cover the anger with a smile for social acceptance. The understanding that we have for one another cannot be copied and programmed into a computer.

A professor of cognitive robotics at Imperial College London, Murray Shanahan, warns that if we don’t program the necessary safeguards into AI to ensure that it stays friendly, it will be our ruin.

That’s why he, Elon Musk, and Stephen Hawking together penned an open letter to the Centre for the Study of Existential Risk (CSER), promoting more research in AI to discover “potential pitfalls.” In the letter, Musk says that AI could become more dangerous than nuclear weapons, while Hawking says it could spell the end of the human race. Shanahan suggests creating artificial general intelligence (AGI) with a human-like psychological framework, or even modeling AI after our own neurological makeup.

Shanahan says AI should be taught how to have human relationships, recognize and understand emotions, and feel empathy. He suggests that the way this should be done is to construct an artificial brain.

If we are expected to have empathy toward these humanoids, won’t they at one point have similar rights to humans? Well, one already does.

One of the most well-known robots, Sophia, made by Hong Kong-based firm Hanson Robotics, has been officially named a citizen of Saudi Arabia, the first country in the world to grant a robot citizenship, giving it more rights than women in the country.

Are governments of the future then going to make humanoids our equals? And will humans be able to control the humanoids’ emotions, or will that be a humanoid rights violation?

Global groups like the World Economic Forum stand behind this. They state,

“Increasingly, we also want intelligent machines to be companions rather than just assistants. We can envision a future in which our household chores are carried out by robot servants who anticipate what needs to be done, in which our elderly and sick are taken care of by robot nurses, our children learn from robot teachers, and our drinks are served by a robot bartender who strikes up a friendly conversation. Stressed employees might even benefit from a session with the sympathetic robot psychoanalyst.”

They say, “To achieve this, we need to incorporate an ‘empathy module’ into the machines.”

There you have it. An AI expert and professor of cognitive robotics warns that we must program humanoids to have an artificial brain with the same emotions as humans, or they will kill us; the World Economic Forum is beckoning this reckoning upon the economy; a robot now has citizenship; and technology companies actually believe that human emotion and empathy can be imitated. Tell me this isn’t crazy.

Works Cited

Antuan Goodwin. “Honda debuts four very different robotics concepts at CES.” Roadshow CES (2018). http://cnet.co/2EcHYqr

Philip Perry. “Can AI Develop Empathy?” Big Think (2017). http://bit.ly/2GIV0dU

Skye Mcdonald. “Truly empathic robots will be a long time coming.” Phys.org (2015). http://bit.ly/2GGvWUR

Astrid M. Rosenthal. “Neural correlates of empathy towards robots.” ResearchGate (2013). http://bit.ly/2DVtZBW

Pascale Fung. “Can we ever build a robot with empathy?” World Economic Forum (2015). http://bit.ly/2E8qyLE
